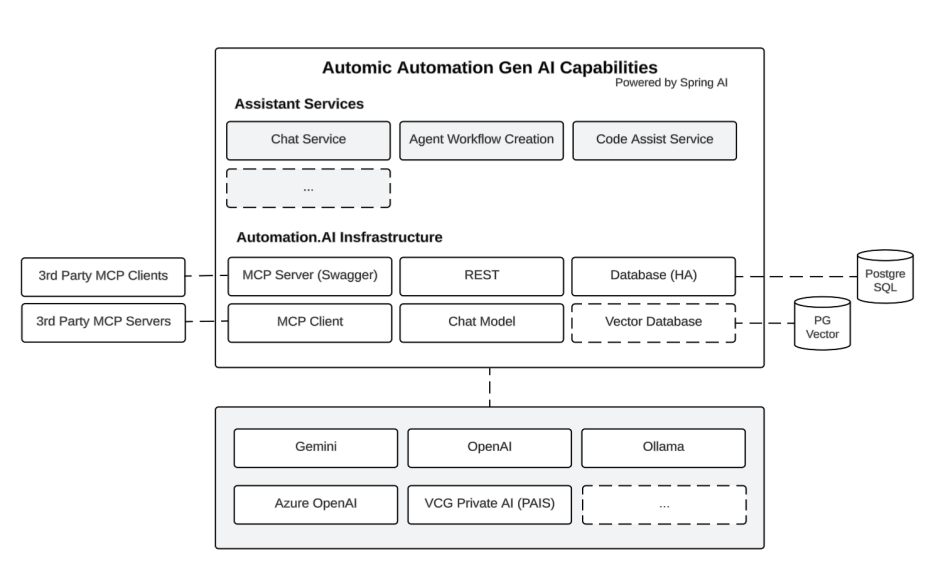

Architecture of the Generative AI Capabilities

Automic Automation’s Gen AI capability is delivered through a dedicated backend component, Automation.AI, which sits between Automic Automation and one or more Large Language Models (LLMs). In on-premises and AAKE environments, you must install and configure Automation.AI and connect it to your chosen AI service. Automic SaaS environments come with Automation.AI preconfigured and ready to use. End users communicate only with Automation.AI and never directly with the LLM, so conversations and data exchanges are handled within the secure internal environment.

Automation.AI is an AI-platform-agnostic service that connects to LLM backends over REST. It receives prompt requests from the Automation Assistant in the Automic Web Interface or from the AE REST API, forwards them to the connected LLM, and returns the responses transparently. Because of this design, you can select your preferred AI models in on-premises and AAKE setups, whereas Automic SaaS uses a Gemini model preconfigured by Broadcom. The Automation.AI backend is responsible for conversation handling, prompt construction, and data context management. Front-end components such as the Automation Assistant and the script editor features act as clients that invoke Automation.AI services.
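The intermediary pattern described above can be illustrated with a minimal Python sketch. It is not Automation.AI's actual implementation or API: the names `LLMBackend`, `StubBackend`, `Intermediary`, and `ask`, as well as the system context string, are hypothetical and exist only for illustration. The stub stands in for a real adapter, which would issue a REST call to the chosen LLM service.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Protocol


class LLMBackend(Protocol):
    """Hypothetical interface: anything that turns chat messages into a reply."""

    def complete(self, messages: list[dict[str, str]]) -> str: ...


class StubBackend:
    """Stand-in for a real LLM. A real adapter would make a REST call here."""

    def complete(self, messages: list[dict[str, str]]) -> str:
        # Reply based on the most recent message only, to keep the demo simple.
        return f"stub reply to: {messages[-1]['content']}"


@dataclass
class Intermediary:
    """Hypothetical relay that owns conversation history and prompt construction."""

    backend: LLMBackend
    system_context: str = "You assist with workload automation."  # assumed text
    history: list[dict[str, str]] = field(default_factory=list)

    def ask(self, user_prompt: str) -> str:
        # Record the client's prompt in the conversation history.
        self.history.append({"role": "user", "content": user_prompt})
        # Build the full prompt: system context first, then the conversation.
        messages = [{"role": "system", "content": self.system_context}, *self.history]
        # Forward to whichever backend is connected and store its reply.
        reply = self.backend.complete(messages)
        self.history.append({"role": "assistant", "content": reply})
        return reply


# A client (for example a UI component) only ever talks to the intermediary.
relay = Intermediary(backend=StubBackend())
answer = relay.ask("Explain this script")
```

Because clients depend only on the intermediary, swapping `StubBackend` for an adapter to a different LLM service would not require changes on the client side, which is the practical benefit of a platform-agnostic design.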

See also: